Azure Storage Account

An Azure Storage Account is a secure account that gives you access to the services in Azure Storage. It acts as an administrative container that holds services such as blobs, files, queues, tables, and disks. When we create a storage account in Azure, we get a unique namespace for our storage resources, and that namespace forms part of the URL. For this reason, the storage account name must be unique across all existing storage account names in Azure.
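Because the account name becomes part of a URL, it helps to check a candidate name before trying to create the account. The snippet below is a minimal sketch that assumes the naming rule of 3 to 24 characters, lowercase letters and digits only. The function name `is_valid_account_name` is made up for this illustration, and the check covers the format only; it cannot tell whether the name is already taken in Azure.

```python
import re

# Assumed naming rule: 3-24 characters, lowercase letters and digits only.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_account_name(name: str) -> bool:
    """Return True if `name` has a valid format (uniqueness is not checked)."""
    return _ACCOUNT_NAME.fullmatch(name) is not None


print(is_valid_account_name("mystorageaccount"))  # True
print(is_valid_account_name("My-Storage"))        # False
```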

Types of Storage Accounts

| Storage account type | Supported services | Supported performance tiers | Supported access tiers | Replication options | Deployment model | Encryption |
|---|---|---|---|---|---|---|
| General-purpose V2 | Blob, File, Queue, Table, and Disk | Standard, Premium | Hot, Cool, Archive | LRS, ZRS, GRS, RA-GRS | Resource Manager | Encrypted |
| General-purpose V1 | Blob, File, Queue, Table, and Disk | Standard, Premium | N/A | LRS, GRS, RA-GRS | Resource Manager, Classic | Encrypted |
| Blob storage | Blob (block blobs and append blobs only) | Standard | Hot, Cool, Archive | LRS, GRS, RA-GRS | Resource Manager | Encrypted |

Note: If you want to use all storage services, we recommend the general-purpose V2 account type. If you need a storage account for blobs only, you can use the Blob storage account type.
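The comparison above can also be written as a small lookup. This is only a sketch: the dictionary `ACCOUNT_TYPES` copies the services, access tiers, and replication options from the table, and the helper `matching_account_types` is a hypothetical name used for this illustration, not part of any Azure SDK.

```python
# Values copied from the comparison table above.
ACCOUNT_TYPES = {
    "General-purpose V2": {
        "services": {"blob", "file", "queue", "table", "disk"},
        "access_tiers": {"hot", "cool", "archive"},
        "replication": {"LRS", "ZRS", "GRS", "RA-GRS"},
    },
    "General-purpose V1": {
        "services": {"blob", "file", "queue", "table", "disk"},
        "access_tiers": set(),  # listed as N/A in the table
        "replication": {"LRS", "GRS", "RA-GRS"},
    },
    "Blob storage": {
        "services": {"blob"},
        "access_tiers": {"hot", "cool", "archive"},
        "replication": {"LRS", "GRS", "RA-GRS"},
    },
}


def matching_account_types(services, replication=None, needs_access_tiers=False):
    """Return the account types that satisfy all of the given requirements."""
    needed = {service.lower() for service in services}
    matches = []
    for name, spec in ACCOUNT_TYPES.items():
        if not needed <= spec["services"]:
            continue  # at least one required service is missing
        if replication is not None and replication not in spec["replication"]:
            continue
        if needs_access_tiers and not spec["access_tiers"]:
            continue
        matches.append(name)
    return matches


print(matching_account_types(["Blob", "Queue"], replication="ZRS"))
# ['General-purpose V2']
```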

Types of performance tiers

Standard performance: This tier is backed by magnetic drives and provides a low cost per GB. It is best for applications that need bulk storage or that access data infrequently.

Premium storage performance: This tier is backed by solid-state drives and offers consistent, low-latency performance. It can only be used with Azure virtual machine disks and is best for I/O-intensive workloads such as databases.

(Every virtual machine disk is stored in a storage account. So, if we are attaching a disk, we go for premium storage. But if we are using the storage account specifically to store blobs, we go for standard performance.)

Access Tiers

There are four types of access tiers available:

Premium Storage (preview): It provides high-performance hardware for data that is accessed frequently.

Hot storage: It is optimized for storing data that is accessed frequently.

Cool Storage: It is optimized for storing data that is infrequently accessed and stored for at least 30 days.

Archive Storage: It is optimized for storing files that are rarely accessed and stored for a minimum of 180 days with flexible latency needs (on the order of hours).
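The tier descriptions above can be turned into a simple rule of thumb. The sketch below assumes that the 30-day and 180-day minimum storage periods are also reasonable cut-off points for choosing a tier. That choice, and the function name `suggest_access_tier`, are assumptions made for this illustration rather than an Azure rule.

```python
def suggest_access_tier(days_since_last_access: int) -> str:
    """Suggest a tier from how long ago the data was last accessed.

    The 30 and 180 day thresholds are assumptions borrowed from the
    minimum storage periods of the Cool and Archive tiers.
    """
    if days_since_last_access < 0:
        raise ValueError("days_since_last_access must not be negative")
    if days_since_last_access >= 180:
        return "Archive"
    if days_since_last_access >= 30:
        return "Cool"
    return "Hot"


for days in (3, 45, 400):
    print(days, suggest_access_tier(days))
```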

Advantage of Access Tiers:

When a user uploads a document into storage, the document is usually accessed frequently at first. During that time, we keep the document in the hot storage tier.

After some time, once the work on the document is completed, nobody generally accesses it, so it becomes an infrequently accessed document. We can then move the document from hot storage to cool storage to save cost, because cool storage charges less for storing the data and is billed based on the number of times the document is accessed.

If we do not expect the document to be referred to again for six months or a year, we can move it to archive storage.

So hot storage is costlier than cool storage in terms of storage, but cool storage is more expensive in terms of access. Archive storage is used for archiving documents that are not accessed.
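To see this trade-off in numbers, we can compare the monthly cost of the two tiers for the same data. The prices in this sketch are made-up values chosen only to show the shape of the trade-off and are not real Azure prices. The names `TierPrice`, `monthly_cost`, and `cheaper_tier` are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPrice:
    storage_per_gb_month: float  # cost of keeping 1 GB for one month
    read_per_gb: float           # cost of reading 1 GB


# Hypothetical prices for illustration only (not real Azure pricing).
PRICES = {
    "Hot": TierPrice(storage_per_gb_month=0.020, read_per_gb=0.000),
    "Cool": TierPrice(storage_per_gb_month=0.010, read_per_gb=0.010),
}


def monthly_cost(tier: str, stored_gb: float, read_gb: float) -> float:
    """Storage cost plus access cost for one month in the given tier."""
    price = PRICES[tier]
    return stored_gb * price.storage_per_gb_month + read_gb * price.read_per_gb


def cheaper_tier(stored_gb: float, read_gb: float) -> str:
    """Return the tier with the lower monthly cost for this usage pattern."""
    return min(PRICES, key=lambda tier: monthly_cost(tier, stored_gb, read_gb))


print(cheaper_tier(stored_gb=100, read_gb=10))   # Cool: rarely read
print(cheaper_tier(stored_gb=100, read_gb=500))  # Hot: read heavily
```

With these assumed prices, 100 GB that is read only occasionally is cheaper in the cool tier, while the same data read heavily is cheaper in the hot tier.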

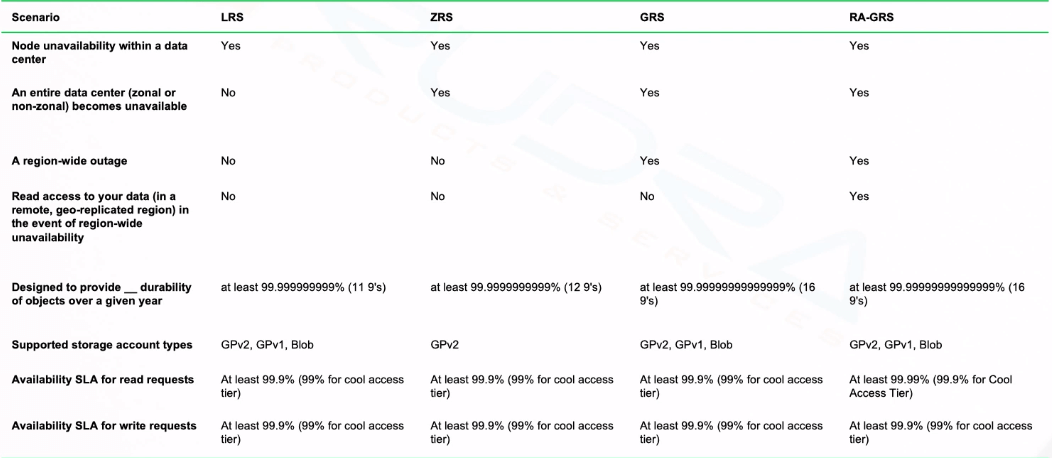

Azure Storage Replication

Azure Storage Replication is used for the durability of the data. It copies our data so that it stays protected from planned and unplanned events, ranging from transient hardware failures and network or power outages to massive natural disasters and man-made vulnerabilities.

Azure creates multiple copies of our data and stores them in different places, based on the replication strategy.

LRS (Locally Redundant Storage): With locally redundant storage, the data is stored within a single data center. If the data center or the region goes down, the data will be lost.

ZRS (Zone-Redundant Storage): The data is replicated across data centers within the region. The data stays available even if one node is unavailable, and also if an entire data center goes down, because it is already copied to another data center within the region. However, if the region itself goes down, you will not be able to access the data.

GRS (Geo-Redundant Storage): To protect our data against region-wide failures, we can go for geo-redundant storage. In this case, the data is replicated to the paired region within the geography. If we also want read-only access to the data that is copied to the other region, we can go for RA-GRS (Read-Access Geo-Redundant Storage). Each option offers a different level of durability, as we can see in the table below.
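The differences between LRS, ZRS, GRS, and RA-GRS can be summarised as a small decision helper. This is a sketch built only from the descriptions above. It assumes the options are listed from least to most expensive, and `choose_replication` is a hypothetical helper name.

```python
# For each option: (a copy survives loss of a data center,
#                   a copy survives loss of the region,
#                   the copy in the other region is readable)
# The order is assumed to run from least to most expensive.
REPLICATION = {
    "LRS": (False, False, False),
    "ZRS": (True, False, False),
    "GRS": (True, True, False),
    "RA-GRS": (True, True, True),
}


def choose_replication(datacenter=False, region=False, read_secondary=False):
    """Return the first option that meets every requested guarantee."""
    for name, (dc_ok, region_ok, read_ok) in REPLICATION.items():
        if datacenter and not dc_ok:
            continue
        if region and not region_ok:
            continue
        if read_secondary and not read_ok:
            continue
        return name
    raise ValueError("no replication option satisfies the requirements")


print(choose_replication(datacenter=True))                   # ZRS
print(choose_replication(region=True, read_secondary=True))  # RA-GRS
```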

Storage account endpoints

Whenever we create a storage account, we get endpoints for accessing the data within it. Each object that we store in Azure Storage has an address that includes our unique account name. The combination of the account name and the service endpoint forms the endpoint for the storage account.

For example, if your general-purpose account name is mystorageaccount, then the default endpoints for the different services look like this:

Azure Blob storage: http://mystorageaccount.blob.core.windows.net

Azure Table storage: http://mystorageaccount.table.core.windows.net

Azure Queue storage: http://mystorageaccount.queue.core.windows.net

Azure Files: http://mystorageaccount.file.core.windows.net
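The pattern in the endpoints listed above can be expressed in a few lines of code. The sketch below builds the default endpoint for a service and then the address of a single blob. The function names and the `scheme` parameter are assumptions for this illustration, and the container and blob names are placeholders.

```python
from urllib.parse import quote

SERVICES = ("blob", "table", "queue", "file")


def default_endpoint(account_name: str, service: str, scheme: str = "http") -> str:
    """Build the default endpoint of one service in a storage account."""
    if service not in SERVICES:
        raise ValueError(f"unknown service: {service!r}")
    return f"{scheme}://{account_name}.{service}.core.windows.net"


def blob_url(account_name: str, container: str, blob_name: str) -> str:
    """Build the address of a blob: endpoint, then container, then blob name."""
    endpoint = default_endpoint(account_name, "blob")
    return f"{endpoint}/{container}/{quote(blob_name)}"


for service in SERVICES:
    print(default_endpoint("mystorageaccount", service))

print(blob_url("mystorageaccount", "docs", "reports/2020 q1.pdf"))
# http://mystorageaccount.blob.core.windows.net/docs/reports/2020%20q1.pdf
```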

If we want to map a custom domain to these endpoints, we can do that too. The custom domain then refers to these storage service endpoints.

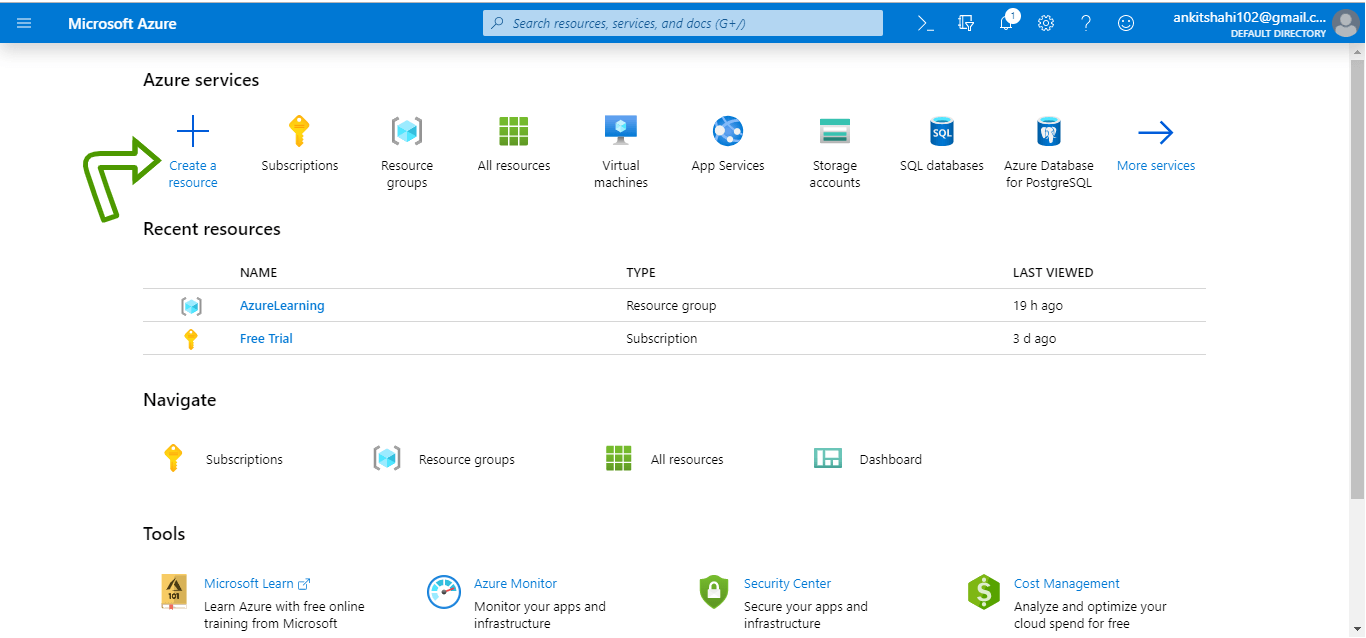

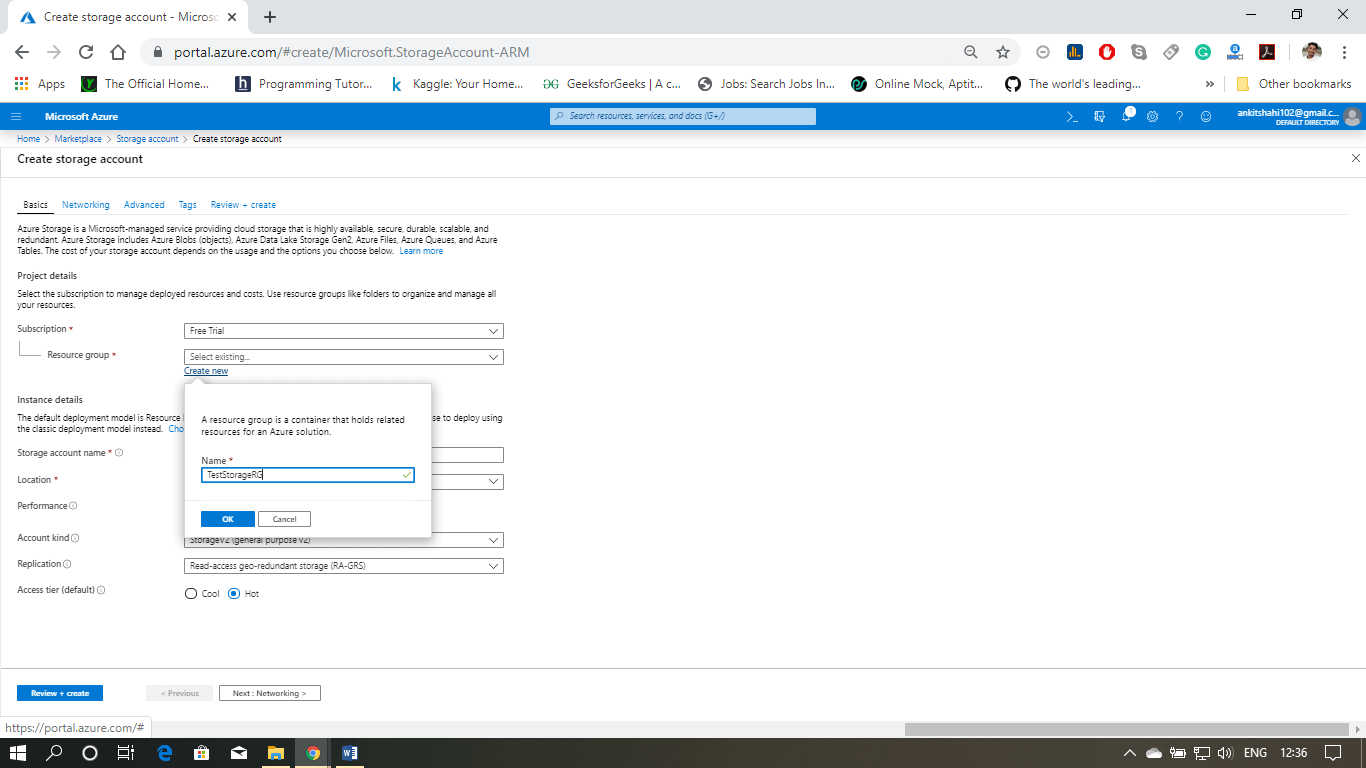

Creating and configuring Azure Storage Account

Let’s see how to create a storage account in the Azure portal and discuss some of the important settings associated with it:

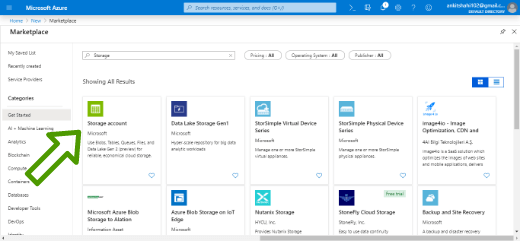

Step 1: Log in to the Azure portal and, on the home screen, click “Create a resource”. Then type storage account in the search box and click “Storage account”.

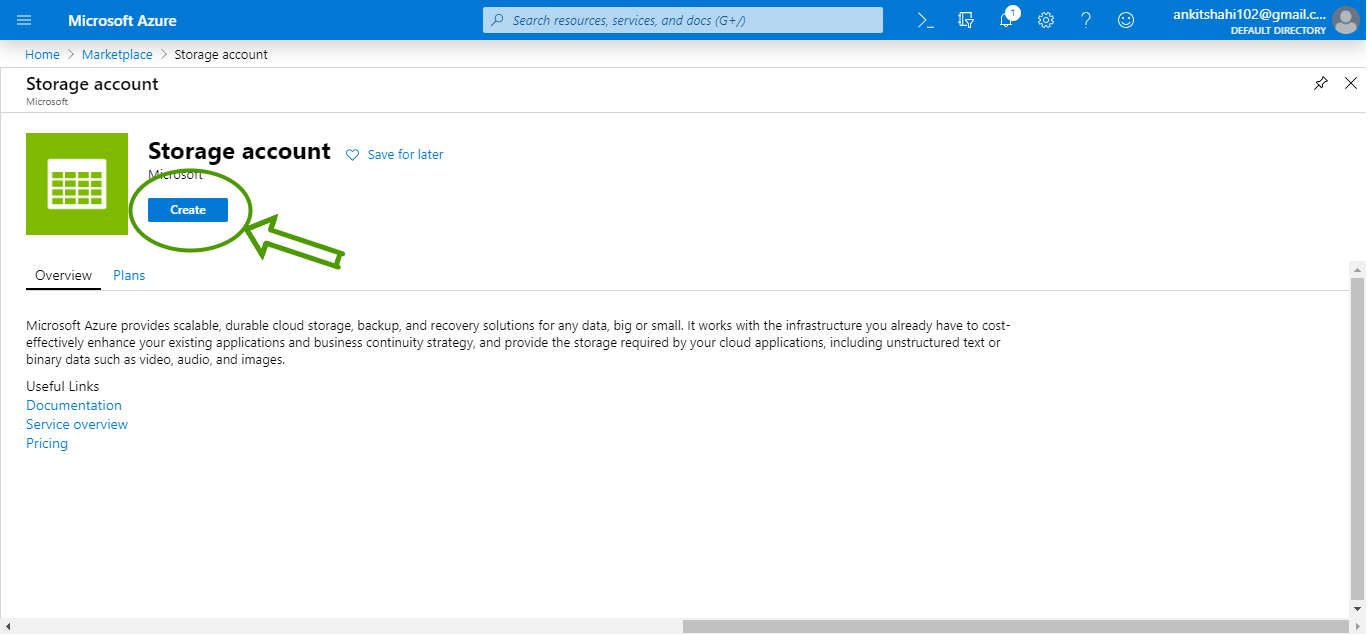

Step 2: Click Create. You will be redirected to the Create a storage account window.

Step 3: Whenever you create a resource in Azure, you first need to select the subscription and then choose a resource group. In our case, the subscription is “Free Trial”.

Use an existing resource group or create a new one. Here we are going to create a new resource group.

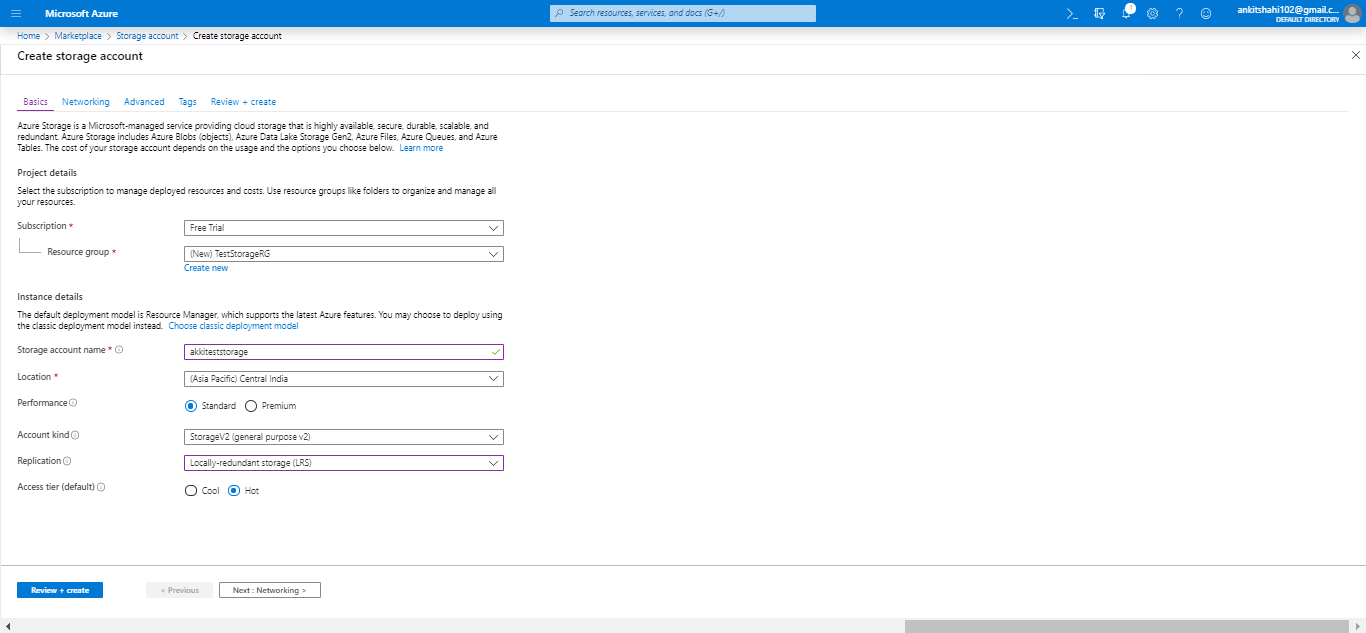

Step 4: Then fill in the storage account name. It must be all lowercase and unique across all of Azure. Then select your location, performance tier, account kind, replication strategy, and access tier, and click Next.

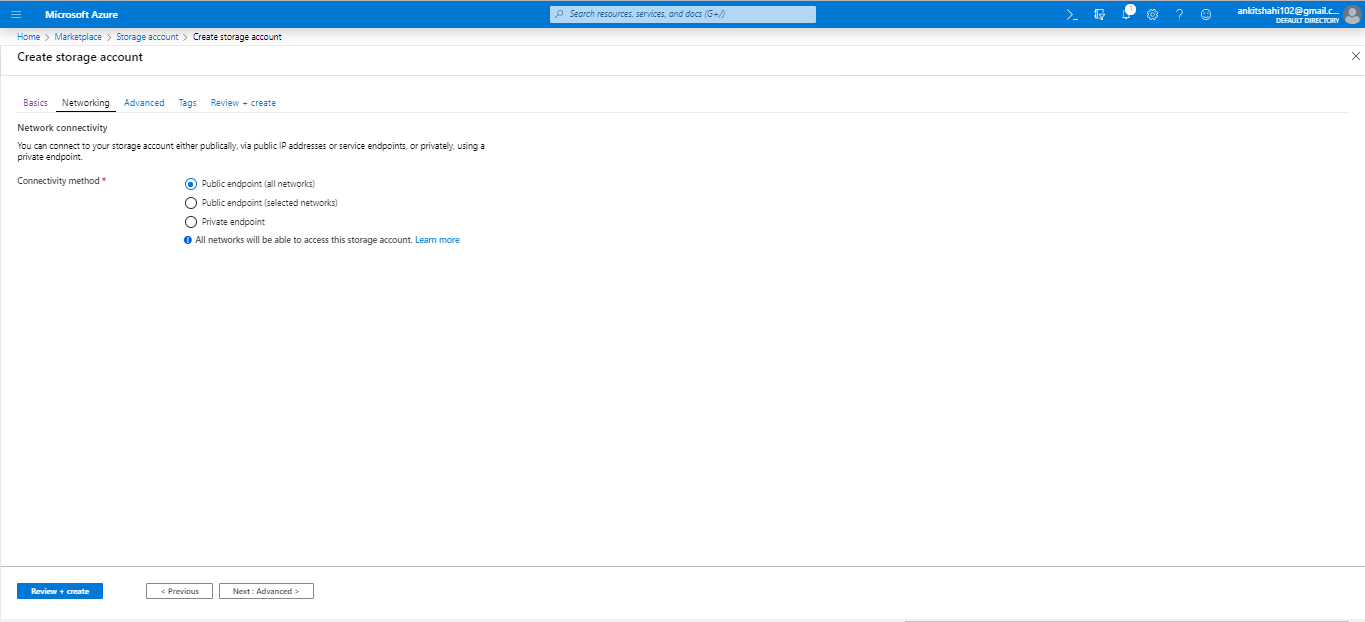

Step 5: You are now on the Networking window. Here, select the connectivity method, then click Next.

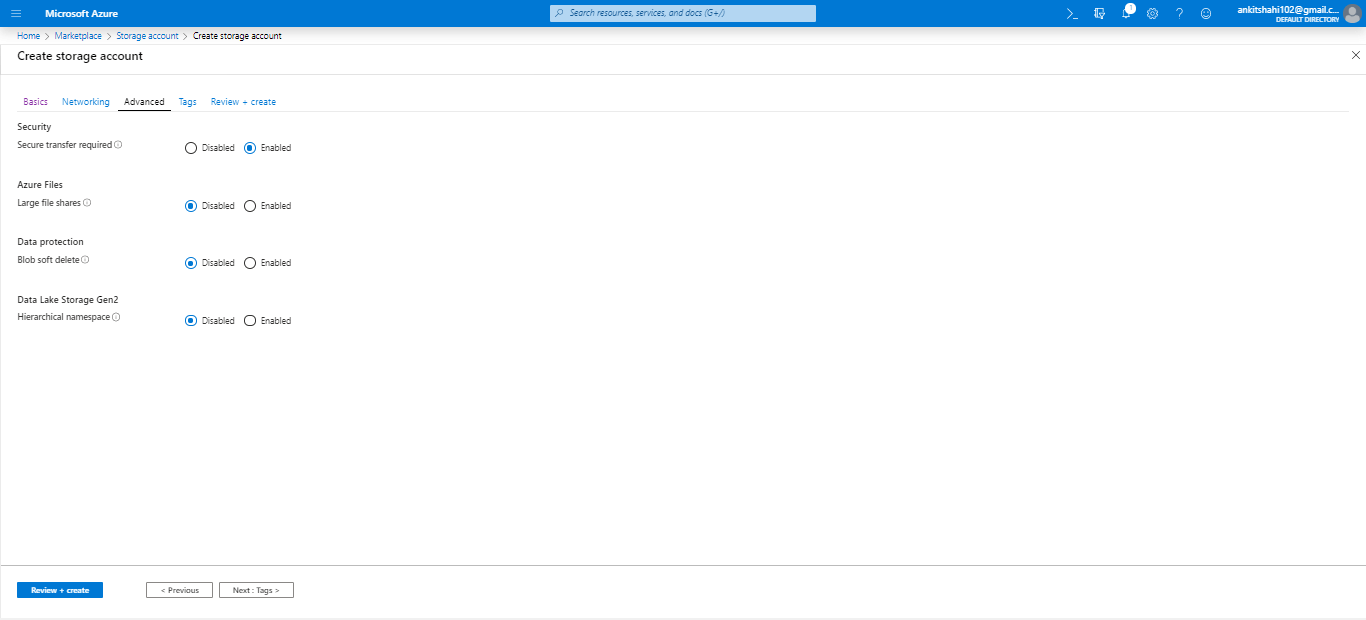

Step 6: You are now on the Advanced window, where you need to enable or disable the security, Azure Files, data protection, and Data Lake Storage options, and then click Next.

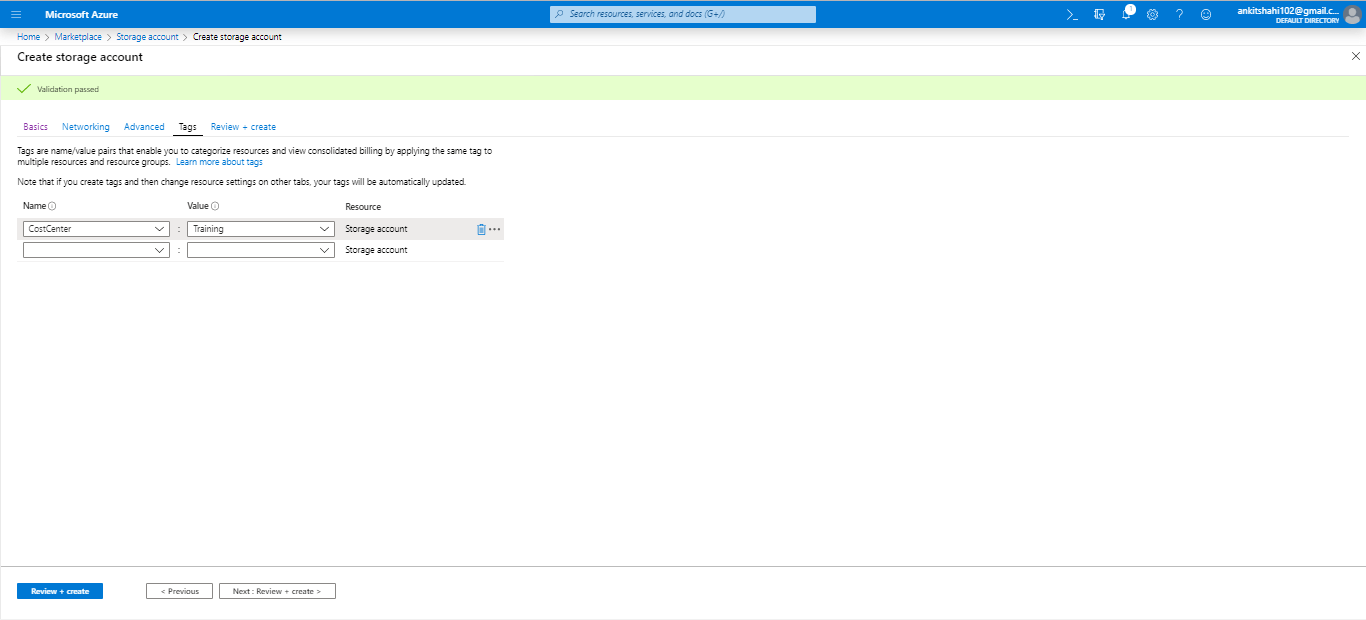

Step 7: Now you are redirected to the Tags window, where you can provide tags to classify your resources into specific buckets. Enter the name and value of the tag and click Next.

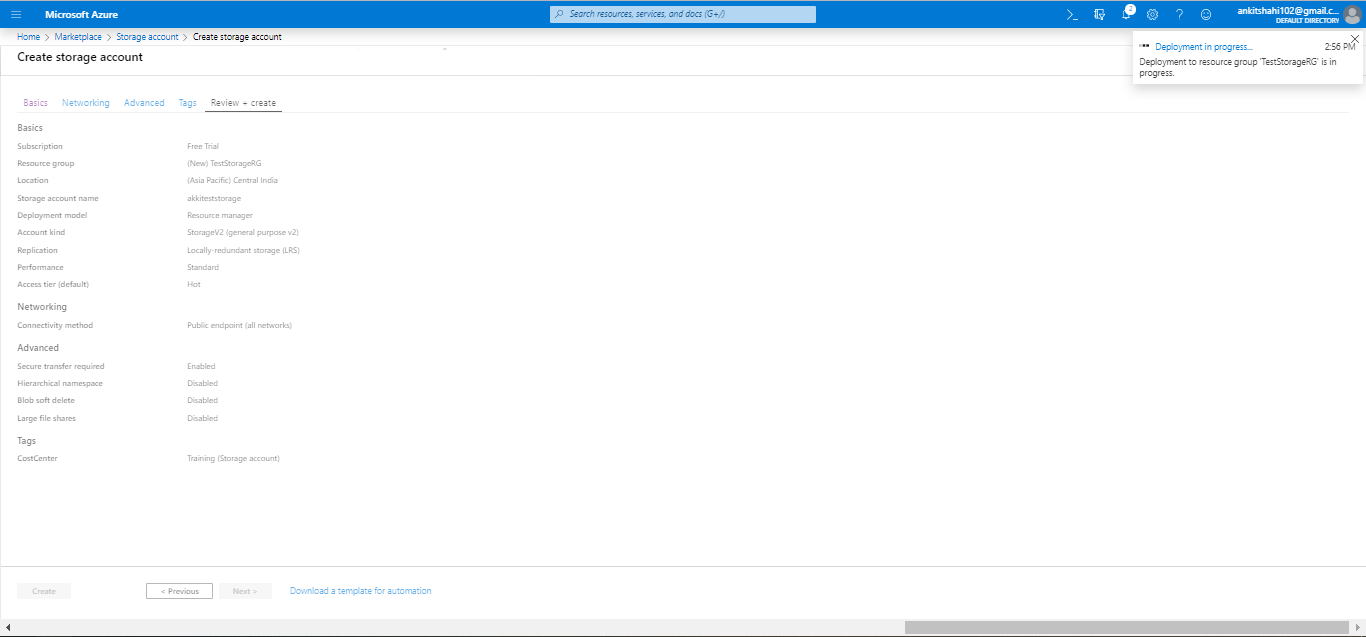

Step 8: This is the final step. Once validation has passed, you can review all the settings that you have provided. Finally, click Create.

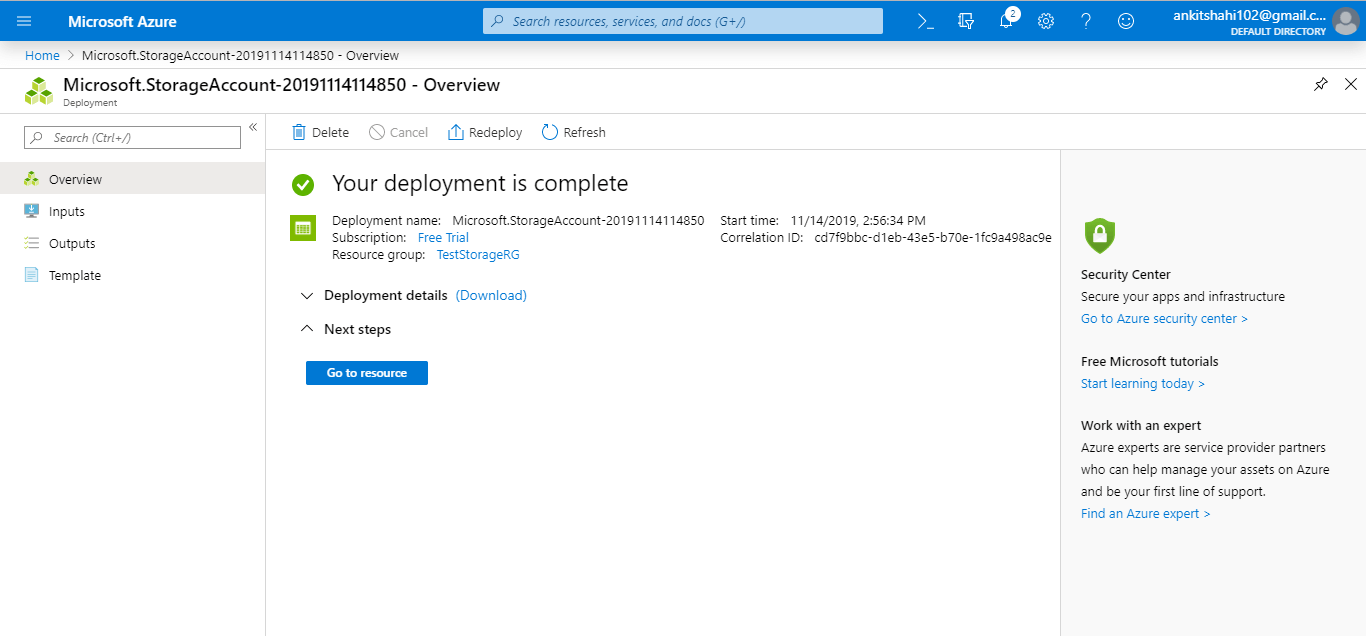

Our storage account has now been created successfully, and a window appears with the message “Your deployment is complete”.

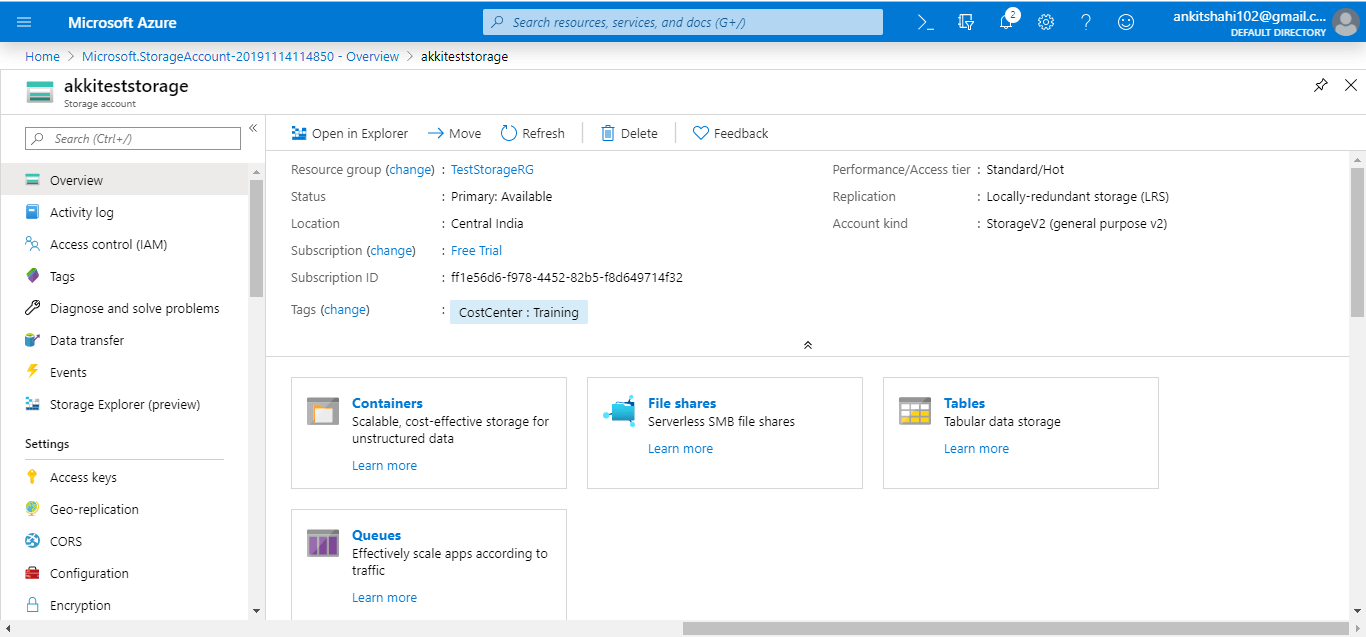

Click on “Go to resource”, and the following window will appear.

You can see all the values that you selected for the different configuration settings when creating the storage account.

Let’s look at some key configuration settings and key functionality of the storage account:

Activity Log: We can view an activity log for every resource in Azure. It provides the record of activities that have been carried out on that particular resource. It is common for all the resources in Azure.

Access Control: Here, we can delegate access for the storage account to different users.

Tags: We can assign new tags or modify existing tags here. We can also diagnose and solve problems, if we run into any.

Events: We can subscribe to some of the events that happen within this storage account, and the subscriber can be either a logic app or a function. For example, when a blob is created in the storage account, that event can trigger a logic app and pass it some metadata about the blob.
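The idea of reacting to a storage event can be sketched as a small dispatcher. The example assumes an event shaped like an Event Grid event, with an `eventType` of `Microsoft.Storage.BlobCreated` and the blob address under `data.url`. The sample payload is invented for this illustration and shows only a few fields, and the `on` and `dispatch` helpers are hypothetical rather than part of any Azure library.

```python
from typing import Callable, Dict, Optional

HANDLERS: Dict[str, Callable[[dict], str]] = {}


def on(event_type: str):
    """Register the decorated function as the handler for `event_type`."""
    def register(func):
        HANDLERS[event_type] = func
        return func
    return register


@on("Microsoft.Storage.BlobCreated")
def blob_created(event: dict) -> str:
    # The blob metadata travels in the "data" part of the event.
    return f"New blob: {event['data']['url']}"


def dispatch(event: dict) -> Optional[str]:
    """Call the handler for the event type, or return None if there is none."""
    handler = HANDLERS.get(event.get("eventType"))
    return handler(event) if handler else None


# Invented sample event; a real event carries more fields.
sample_event = {
    "eventType": "Microsoft.Storage.BlobCreated",
    "subject": "/blobServices/default/containers/docs/blobs/report.pdf",
    "data": {"url": "http://mystorageaccount.blob.core.windows.net/docs/report.pdf"},
}

print(dispatch(sample_event))
```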

Storage explorer: This is where you can explore the data that resides in your storage account in terms of blobs, files, queues, and tables. There is also a desktop version of Storage Explorer that you can download and connect to the account; this one is the web version.

Access Keys: We can use these keys to develop applications that access the data within the storage account. An access key gives blanket access, so we recommend not sharing it with anyone other than the person who created the storage account. Instead, we can generate SAS keys that are valid for a limited period and grant limited access, and provide those SAS signatures to our developers.

CORS (Cross-Origin Resource Sharing): Here, we can specify the domain names and the operations that are allowed.
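A CORS rule pairs allowed origins with allowed operations. The sketch below shows how such a rule could be evaluated for an incoming request. The `CorsRule` class and `is_request_allowed` function are hypothetical, and the matching logic is simplified (exact origin match or a `*` wildcard) compared with what a real service does.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class CorsRule:
    allowed_origins: FrozenSet[str]  # e.g. {"https://www.example.com"} or {"*"}
    allowed_methods: FrozenSet[str]  # e.g. {"GET", "PUT"}


def is_request_allowed(rules: Iterable[CorsRule], origin: str, method: str) -> bool:
    """Return True if any rule allows this origin and HTTP method."""
    method = method.upper()
    for rule in rules:
        origin_ok = "*" in rule.allowed_origins or origin in rule.allowed_origins
        if origin_ok and method in rule.allowed_methods:
            return True
    return False


rules = [CorsRule(frozenset({"https://www.example.com"}), frozenset({"GET"}))]
print(is_request_allowed(rules, "https://www.example.com", "get"))  # True
print(is_request_allowed(rules, "https://www.example.com", "PUT"))  # False
```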

Configuration: Here we can change configuration values. Some things cannot be changed once the storage account is created, for example the performance tier. But we can change the access tier, whether secure transfer is required, the replication strategy, and so on.

Encryption: Here, we can specify our own key if we want to encrypt the data within the storage account. To do so, we select the check box and then choose the Key Vault URI where the key is located.

Shared access signature (SAS): Here, we can generate SAS keys with limited access and for a limited period, and provide them to developers who are building applications that use the storage account. A SAS is used to access data that is stored in the storage account.
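The idea behind a SAS is a token that is signed with the account key and carries its own permissions and expiry time. The sketch below illustrates that idea with a plain HMAC-SHA256 signature. It is a simplified stand-in and does not follow the actual Azure SAS format or string-to-sign. The function names, the token fields, and the key are all assumptions made for this illustration.

```python
import base64
import hashlib
import hmac


def _sign(account_key: bytes, permissions: str, expiry: int, resource: str) -> str:
    # Simplified message layout; NOT the real Azure string-to-sign.
    message = f"{permissions}\n{expiry}\n{resource}".encode("utf-8")
    digest = hmac.new(account_key, message, hashlib.sha256).digest()
    return base64.b64encode(digest).decode("ascii")


def make_token(account_key: bytes, resource: str, permissions: str, expiry: int) -> dict:
    """Create a token granting `permissions` on `resource` until `expiry`."""
    return {
        "resource": resource,
        "permissions": permissions,  # e.g. "r" or "rw"
        "expiry": expiry,            # Unix timestamp in seconds
        "signature": _sign(account_key, permissions, expiry, resource),
    }


def is_token_valid(account_key: bytes, token: dict, operation: str, now: int) -> bool:
    """Check the signature, the expiry time, and the requested operation."""
    expected = _sign(account_key, token["permissions"], token["expiry"], token["resource"])
    if not hmac.compare_digest(expected, token["signature"]):
        return False  # token was tampered with or signed with another key
    if now >= token["expiry"]:
        return False  # limited period has ended
    return operation in set(token["permissions"])  # limited access


key = b"example-account-key"  # placeholder, never hard-code a real key
token = make_token(key, "/docs/report.pdf", "r", expiry=1_000)
print(is_token_valid(key, token, "r", now=500))    # True
print(is_token_valid(key, token, "w", now=500))    # False
print(is_token_valid(key, token, "r", now=1_500))  # False
```

The holder of such a token never sees the account key, which is why handing out a signature is safer than handing out the key itself.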

Firewalls and Virtual network: Here, we can configure the network in such a way that the connections from certain virtual networks or certain IP address ranges are allowed to connect to this storage account.
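The IP address part of such a rule set amounts to checking whether a client address falls inside one of the allowed ranges. Here is a minimal sketch using Python's standard `ipaddress` module. The function name `is_ip_allowed` and the example ranges (taken from the address blocks reserved for documentation) are assumptions for this illustration.

```python
import ipaddress
from typing import Iterable


def is_ip_allowed(client_ip: str, allowed_ranges: Iterable[str]) -> bool:
    """Return True if `client_ip` falls inside any allowed address or CIDR range."""
    address = ipaddress.ip_address(client_ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in allowed_ranges)


allowed = ["203.0.113.0/24", "198.51.100.7"]  # a range and a single address
print(is_ip_allowed("203.0.113.42", allowed))  # True
print(is_ip_allowed("192.0.2.1", allowed))     # False
```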

We can also configure advanced threat protection and make the storage account able to host a static website.

Properties: Here we can see the properties related to the storage account.

Locks: Here, we can apply locks on the services.

So these are the different settings we can configure. The rest of the settings relate to the individual services within the storage account, for example blob, file, table, and queue.