
Spark Map function

In Spark, the map function passes each element of the source dataset through a function and returns a new distributed dataset (RDD) formed from the results. The source dataset itself is left unchanged.
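As a minimal sketch of that definition, the snippet below maps a small RDD of strings to their lengths. It assumes it is run inside `spark-shell`, where the SparkContext is already available as `sc`; the names `words` and `lengths` and the sample data are hypothetical and chosen only for illustration.

```scala
// Assumption: running inside spark-shell, so `sc` (SparkContext) is predefined.
// `words` and `lengths` are hypothetical names; the data is illustrative only.
val words = sc.parallelize(Seq("spark", "map", "rdd"))

// map applies the function to every element and returns a new RDD.
// Here the element type changes from String to Int.
val lengths = words.map(w => w.length)

lengths.collect()   // Array(5, 3, 3)
words.collect()     // Array(spark, map, rdd): the source RDD is unchanged
```

The result always has exactly one output element per input element, which is what distinguishes `map` from transformations such as `filter`.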

Example of Map function

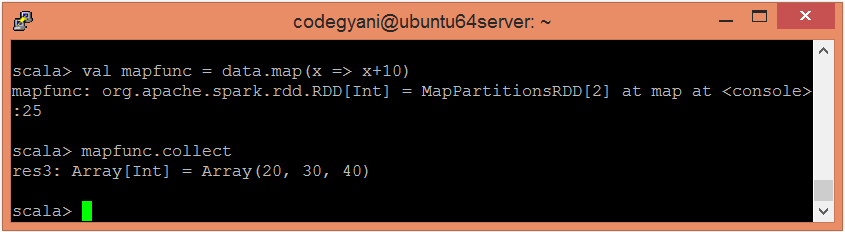

In this example, we add the constant value 10 to each element of the dataset.

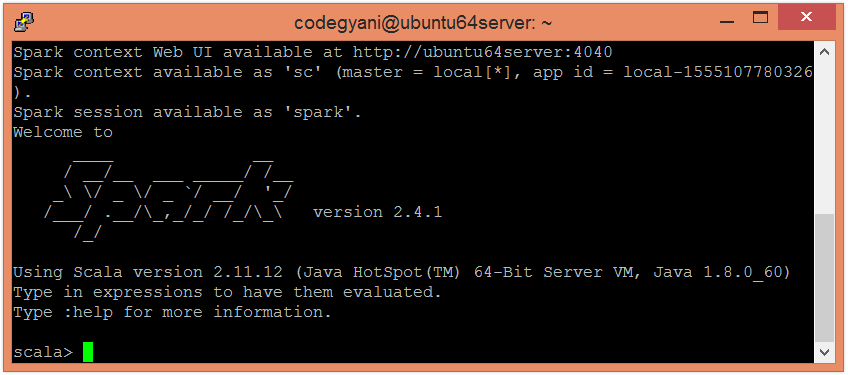

- To open Spark in Scala mode, run the following command.

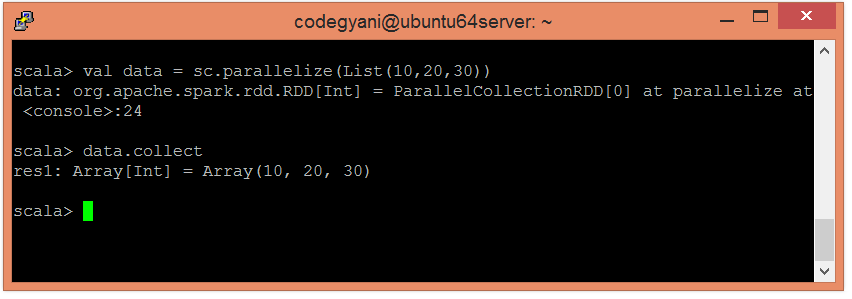

- Create an RDD from a parallelized collection.

- Read the contents of the new RDD by using the following command.

- Apply the map function, passing the expression to evaluate for each element.

- Read the mapped result by using the following command.

Here, we get the desired output: each element increased by 10.
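The steps above can be sketched as a single `spark-shell` session. This is an illustrative reconstruction, not a copy of the original commands: the variable names `data` and `mapfunc` and the input values `10, 20, 30` are assumptions, and `sc` is the SparkContext that `spark-shell` provides.

```scala
// Start the Scala shell from a terminal first (not part of the Scala code):
//   spark-shell
// Inside the shell, `sc` (SparkContext) is predefined.

// Step 1: create an RDD from a parallelized collection.
// The values 10, 20, 30 are assumed sample data.
val data = sc.parallelize(List(10, 20, 30))

// Step 2: read the contents of the RDD.
data.collect()      // Array(10, 20, 30)

// Step 3: apply map, adding the constant 10 to each element.
val mapfunc = data.map(x => x + 10)

// Step 4: read the mapped result.
mapfunc.collect()   // Array(20, 30, 40)
```

Note that `map` is a lazy transformation: Spark only computes the new values when an action such as `collect()` is called.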
